The Technology

The Internet routes packets.

FrogNet routes meaning.

A self-forming mesh of autonomous computers that think together when they can and think alone when they must. Full web applications over any transport — including a 4800-baud narrowband link with 20% packet loss.

FrogNet is

A self-forming mesh of autonomous computers. Every node is a full LAMP stack with a semantic proxy and daemon in front. Nodes discover each other over any IP transport, compress application traffic by learning what's static and only shipping what changed, and keep working when the mesh fragments. The Internet is optional.

FrogNet is not

Not a VPN mesh (Tailscale, Nebula, WireGuard). Those give you an encrypted IP fabric — FrogNet also gives you the applications, the compression, the database, and the ability to run offline. Not a radio mesh (Meshtastic, Reticulum, AREDN). Those move packets or short messages — FrogNet moves full HTTP and delivers real web apps down to the narrowband rates where other stacks give up. Not a blockchain, not a cryptocurrency, not a CDN, not a dark-web overlay, and not "just a faster WireGuard." And it is deliberately not a transport for amateur (ham) radio — see the FAQ for why.

Sovereign nodes

Every node is a complete, autonomous system.

A single FrogNet node, completely disconnected from everything else, is still a functioning web server, database server, DNS server, DHCP server, and application platform. When nodes discover each other, they form a mesh automatically. When the mesh fragments, each fragment continues operating independently. When fragments rejoin, the mesh reconverges without reconciliation. Connectivity is a coordination channel — not a prerequisite.

Every node runs four cooperating processes: the semantic proxy on port 80 (HTTP interception, template extraction, SAME/DIFF caching), the semantic daemon on port 9009 (HTTP execution, semantic decoding, response compression), Apache on port 8080 (LAMP application server, PHP endpoints, dashboards), and MySQL on port 3306 (template store, semantic cache, transient database). Each node owns a /24 subnet and its canonical identity is its .1 address on that subnet.
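The port layout and the ".1 on its own /24" identity rule can be sketched in a few lines. This is only an illustration: the `NODE_PORTS` name, the `canonical_identity` helper, and the `10.42.7.0/24` subnet are hypothetical and not taken from the FrogNet codebase.

```python
import ipaddress

# Port layout as listed in the text; the dictionary name is hypothetical.
NODE_PORTS = {
    "semantic_proxy": 80,
    "semantic_daemon": 9009,
    "apache": 8080,
    "mysql": 3306,
}

def canonical_identity(subnet: str) -> ipaddress.IPv4Address:
    """Return the .1 address of the /24 subnet a node owns."""
    net = ipaddress.ip_network(subnet, strict=True)
    if net.version != 4 or net.prefixlen != 24:
        raise ValueError("this sketch assumes one IPv4 /24 per node")
    return net.network_address + 1

# Example subnet is made up for illustration.
print(canonical_identity("10.42.7.0/24"))  # 10.42.7.1
```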

Any transport

The radio is just the pipe. What runs on top is the point.

FrogNet is an application layer. It runs a full sovereign stack on every node — web server, database, DNS, DHCP, sensor ingest, actuator control, semantic compression — and it treats the transport underneath as a replaceable commodity. Fiber, WiFi, Ethernet, WireGuard tunnel, LoRa, satellite, narrowband RF: the application doesn't know and doesn't care which one is carrying its bytes. The network finds its own path.

Currently running in production: WiFi, Ethernet, and WireGuard tunnels over the public Internet — meshing Seattle, New York, and Amsterdam.

Validated in the lab: narrowband operation down to 4800 baud with 20% packet loss, measured on Ethernet-to-Ethernet links shaped with delay, jitter, and loss to match real RF channel conditions. Same application stack, same wire protocol, same measurements that would apply to any physical medium running at that rate.
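To get a feel for what that rate means, here is a rough back-of-the-envelope calculation. It assumes 1 baud carries 1 bit, no framing overhead, independent losses, and idealized retransmission, so each byte is sent 1/(1 - loss) times on average. The 10 KB and 40-byte payload sizes are illustrative, not measurements.

```python
def airtime_seconds(payload_bytes: int, bits_per_second: int = 4800, loss: float = 0.20) -> float:
    """Estimate time on the wire, including expected retransmissions."""
    if not 0 <= loss < 1:
        raise ValueError("loss must be in [0, 1)")
    expected_sends = 1 / (1 - loss)  # 1.25 sends per byte at 20% loss
    return payload_bytes * 8 * expected_sends / bits_per_second

print(round(airtime_seconds(10 * 1024), 1))  # 21.3 seconds for a full 10 KB response
print(round(airtime_seconds(40), 3))         # 0.083 seconds for a 40-byte delta
```

Under these assumptions a full page takes tens of seconds while a small delta takes a fraction of a second, which is why shipping only what changed matters at narrowband rates.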

Active integration with external mesh technologies: Meshtastic and MeshCore are on our radar for both roles FrogNet can play. As a data endpoint, a small RF bridge receives messages from a Meshtastic / LoRa / MeshCore network and writes them directly into FrogNet's transient database — which is what's happening today with the ESP32 sensor stream arriving from New-York-1. As a transport, FrogNet's semantic layer rides over the RF network as the bearer. Same architecture, different seam.

The one transport FrogNet deliberately avoids: amateur (ham) radio. FCC rule 47 CFR § 97.113(a)(4), part of the Part 97 amateur service rules, prohibits "messages encoded for the purpose of obscuring their meaning" on the amateur bands, and BLDC-1's template-dictionary deltas can't be distinguished from a cipher by a listener who doesn't hold the dictionary. We work with the amateur emergency-comms community over other links, not over the amateur bands themselves. See the FAQ for the full reasoning.

The WireGuard tunnel broker manages tunnel lifecycle using a pond/chorus model. Nodes register, discover peers, and establish encrypted tunnels automatically. The broker is the only component that touches the public Internet — and it is severable.

Semantic compression

BLDC-1: send only what changed.

BLDC-1 (Binary Layer Delta Compression) operates on structured message streams. For each request, the target node computes the full response locally, then compares it field-by-field against the previous response for the same request type. Only the comparison result crosses the wire — not the response itself.

SAME: Nothing changed. The wire cost is a single SAME signal. The receiver reconstructs the full response from its local cache. Zero content bytes transmitted. In production, 65% of all responses are SAME.

DIFF: Something changed. A DIFF frame carries only the changed fields and their new values. The receiver patches its local cache and reconstructs the full response. A 10 KB response with one changed field costs a few bytes on the wire, not kilobytes.

FULL: First transmission of any request type is always FULL — the complete response establishes the baseline. Every subsequent transmission is either SAME or DIFF. Templates are learned automatically from real traffic — the system observes HTTP exchanges and learns which fields are static and which are dynamic.
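The FULL / SAME / DIFF decision described above can be sketched as a pair of small classes. This is a minimal illustration, not the BLDC-1 implementation: responses are modeled as flat dictionaries, the `Sender` and `Receiver` names are hypothetical, and falling back to FULL when the set of fields changes is an assumption of this sketch.

```python
from typing import Any, Dict, Tuple

Frame = Tuple[str, Dict[str, Any]]  # (kind, fields); a hypothetical in-memory shape

class Sender:
    def __init__(self) -> None:
        self._last: Dict[str, Dict[str, Any]] = {}  # request key -> previous response

    def encode(self, key: str, response: Dict[str, Any]) -> Frame:
        previous = self._last.get(key)
        self._last[key] = dict(response)
        # First response (or a changed field set) establishes a new baseline.
        if previous is None or previous.keys() != response.keys():
            return ("FULL", dict(response))
        changed = {k: v for k, v in response.items() if previous[k] != v}
        return ("DIFF", changed) if changed else ("SAME", {})

class Receiver:
    def __init__(self) -> None:
        self._cache: Dict[str, Dict[str, Any]] = {}

    def decode(self, key: str, frame: Frame) -> Dict[str, Any]:
        kind, fields = frame
        if kind == "FULL":
            self._cache[key] = dict(fields)
        elif kind == "DIFF":
            self._cache[key].update(fields)  # patch only the changed fields
        elif kind != "SAME":
            raise ValueError(f"unknown frame kind: {kind}")
        return dict(self._cache[key])

sender, receiver = Sender(), Receiver()
r1 = {"node": "Seattle1", "status": "up", "temp_c": 21}  # made-up fields
r2 = {"node": "Seattle1", "status": "up", "temp_c": 22}
for resp in (r1, r1, r2):
    frame = sender.encode("GET /status", resp)
    assert receiver.decode("GET /status", frame) == resp
    print(frame[0])  # FULL, then SAME, then DIFF
```

The receiver always ends up with the complete response, even though only the first exchange carried it in full.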

FNW1 wire protocol

Dedicated channels between proxy and daemon.

FNW1 carries BLDC-1 frames between proxy and daemon over dedicated socket channels. Frame format: [frame_len:4][FNW1:4][op:1][payload...]. Message types: REQ_FULL (0x01), REQ_REPEAT (0x02), REQ_DIFF (0x04), RESP_SAME (0x13), RESP_DIFF (0x11), RESP_RAW (0x14), HELLO (0x50).

All frames carry a 4-byte big-endian length prefix. The daemon uses 256-worker thread pools and sequence-tagged frames for ordering guarantees. Up to 128 requests can fire in parallel through the proxy/daemon socket pair, fully utilizing the channel for operations like network discovery.
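A framing sketch based on the layout and opcodes listed above might look like the following. Two things are assumptions here: that `frame_len` counts the bytes after the length field (magic, opcode, and payload), and that the function names `pack_frame` and `unpack_frame` are hypothetical. The sequence tag mentioned above is omitted because the frame layout does not say where it sits.

```python
import struct

MAGIC = b"FNW1"
OPS = {
    "REQ_FULL": 0x01, "REQ_REPEAT": 0x02, "REQ_DIFF": 0x04,
    "RESP_DIFF": 0x11, "RESP_SAME": 0x13, "RESP_RAW": 0x14,
    "HELLO": 0x50,
}
OP_NAMES = {code: name for name, code in OPS.items()}

def pack_frame(op: str, payload: bytes = b"") -> bytes:
    """Build [frame_len:4][FNW1:4][op:1][payload...] with a big-endian length."""
    body = MAGIC + bytes([OPS[op]]) + payload
    return struct.pack(">I", len(body)) + body  # assumption: length excludes itself

def unpack_frame(buf: bytes):
    """Return (op_name, payload, remaining_bytes), or None if the frame is incomplete."""
    if len(buf) < 4:
        return None
    (length,) = struct.unpack(">I", buf[:4])
    if length < 5:
        raise ValueError("frame too short for magic and opcode")
    if len(buf) < 4 + length:
        return None  # wait for more bytes from the socket
    body, rest = buf[4:4 + length], buf[4 + length:]
    if body[:4] != MAGIC:
        raise ValueError("not an FNW1 frame")
    if body[4] not in OP_NAMES:
        raise ValueError(f"unknown opcode 0x{body[4]:02x}")
    return OP_NAMES[body[4]], body[5:], rest

stream = pack_frame("HELLO") + pack_frame("RESP_DIFF", b"temp_c=22")
op, payload, stream = unpack_frame(stream)
print(op, payload)  # HELLO b''
```

Returning the unconsumed bytes lets a reader loop pull several frames out of one socket read, which fits a channel that carries many requests in parallel.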

These are not open connections on well-known ports. They are purpose-built socket pairs between known peers, carrying only FNW1 frames. The proxy/daemon pair authenticates at the HELLO handshake and rejects anything that doesn't speak FNW1. This narrows the attack surface to the protocol itself, which is purpose-built and minimal.

Transient database

Shared state without distributed consensus.

The transient database is a single authoritative store that any node on the network can read from or write to — without direct addressing, without simultaneity, without Internet dependency. It is the integration boundary for everything on the mesh: physical sensors write state, applications read it, actuators respond to it.

No distributed consensus — this is an intentional design decision. Distributed consensus over degraded radio links is not feasible. A consensus round that takes 30 seconds over a flaky HF link would block all operations. Single host, universally accessible. Persistent-until-replaced. Last write wins. When the host shifts, the window resets and clients continue.
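The "persistent-until-replaced, last write wins" rule is simple enough to model in memory. The sketch below is a stand-in for the single authoritative host, not FrogNet's schema: the `TransientStore` class, its method names, and the sample values are all hypothetical.

```python
import itertools

class TransientStore:
    """In-memory stand-in for the single authoritative host (hypothetical API)."""

    def __init__(self):
        self._rows = {}
        self._arrival = itertools.count(1)  # arrival order at the host

    def write(self, entity, key, value, writer):
        # No locking, voting, or version check: the newest arrival replaces the row.
        self._rows[(entity, key)] = {
            "value": value,
            "writer": writer,
            "seq": next(self._arrival),
        }

    def read(self, entity, key):
        # Persistent-until-replaced: a row stays readable until it is overwritten.
        row = self._rows.get((entity, key))
        return None if row is None else row["value"]

store = TransientStore()
store.write("sensor", "pv1", 412, writer="New-York-1")
store.write("sensor", "pv1", 398, writer="Seattle3")
print(store.read("sensor", "pv1"))  # 398
```

Because a write is a single message to a single host, no round of agreement between nodes is needed before the value becomes visible.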

All database access goes through api.php, a generic REST-style API. Entity names map to MySQL tables. The API is identical on every node — applications don't need to know which node they're talking to. databasehost.frognet resolves to the highest-IP active node automatically.
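The "highest-IP active node" rule can be illustrated as follows. How FrogNet tracks which nodes are active is not described here, so this sketch simply takes a list of active addresses as input; the `pick_database_host` name and the sample addresses are hypothetical.

```python
import ipaddress

def pick_database_host(active_nodes):
    """Return the numerically highest address among the active nodes."""
    if not active_nodes:
        raise ValueError("no active nodes to choose from")
    # Compare as addresses, not strings: "10.0.9.1" sorts above "10.0.10.1" as text.
    return max(active_nodes, key=ipaddress.ip_address)

print(pick_database_host(["10.0.9.1", "10.0.10.1", "10.0.2.1"]))  # 10.0.10.1
```

Every node that applies the same deterministic rule to the same set of active nodes picks the same host, with no election.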

This is why it's called the Living Network. The transient database is a living picture of everything happening on the mesh — every sensor reading, every node heartbeat, every compression metric, every link state — updated in real time by every node that touches it.

Sensor platform

Physical sensors and actuators as network participants.

The sensor platform is not part of the FrogNet host — sensors and their concentrators are simply machines on the network. They write to the transient database through the standard API, and any application on any node can read that data. The FrogNet node provides the networking fabric; the sensors are applications that happen to be on that fabric.

ESP32 nodes connect via a Meshtastic RF bridge. The bridge writes incoming packets into the transient database as sensor entries. From that point, the entire FrogNet application stack (dashboards, AI, compression engine) treats them as native data. Live sensor types today: photovoltaic/luminosity, actuator position and state.

Actuator control is bidirectional. An application writes an actuator command to the transient database. The actuator node reads on its next cycle and executes. A photovoltaic sensor under controlled load provides closed-loop confirmation. Full closed loop demonstrated: button press → transient DB write → actuator fires → lamp state changes → sensor reads change → table updates.
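The closed loop can be walked through with a dictionary standing in for the transient database. Everything in this sketch is hypothetical (entity names, the `lamp1` and `pv1` identifiers, the light readings, and the threshold); it only shows that each participant talks to the shared store and never to another participant directly.

```python
db = {}                # stand-in for the transient database: (entity, key) -> value
lamp = {"on": False}   # stand-in for the physical lamp

def press_button(db):
    # The application only writes a command; it never addresses the actuator.
    db[("actuator_command", "lamp1")] = "on"

def actuator_cycle(db, lamp):
    # The actuator node polls on its own schedule and executes what it finds.
    command = db.get(("actuator_command", "lamp1"))
    if command is not None:
        lamp["on"] = (command == "on")
        db[("actuator_state", "lamp1")] = command

def sensor_cycle(db, lamp):
    # The photovoltaic sensor reports what it sees (arbitrary illustrative readings).
    db[("sensor", "pv1")] = 800 if lamp["on"] else 5

def loop_confirmed(db, threshold=100):
    # Confirmation comes from the independent sensor, not from the actuator's own report.
    return (db.get(("actuator_command", "lamp1")) == "on"
            and db.get(("sensor", "pv1"), 0) > threshold)

sensor_cycle(db, lamp)
print(loop_confirmed(db))  # False
press_button(db)
actuator_cycle(db, lamp)
sensor_cycle(db, lamp)
print(loop_confirmed(db))  # True
```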

AI host platform

Local AI as a network participant, not a co-located service.

Like the sensor platform, the AI host is not part of the FrogNet node itself. It is a separate machine on the network with read/write access to the transient database. It sees the same shared state every other application sees — but it can act on it autonomously.

An AI on the mesh sees every physical sensor simultaneously — temperature, motion, power, RF signal strength, luminosity, actuator position. It sees every soft sensor — traffic patterns, node health, connection latency, compression ratios, uplink status. Not from logs analyzed after the fact. From the live state of the network as it happens.

It detects anomalies the moment they appear. It responds by writing back: isolating a compromised node, rerouting traffic, severing the Internet uplink on detection of active intrusion. Because the AI runs locally on the mesh, it operates at full capability whether the Internet uplink is up, degraded, or intentionally cut.

The defender is always present. The attacker loses their window the moment connectivity drops.

Current deployment

Running. Now.

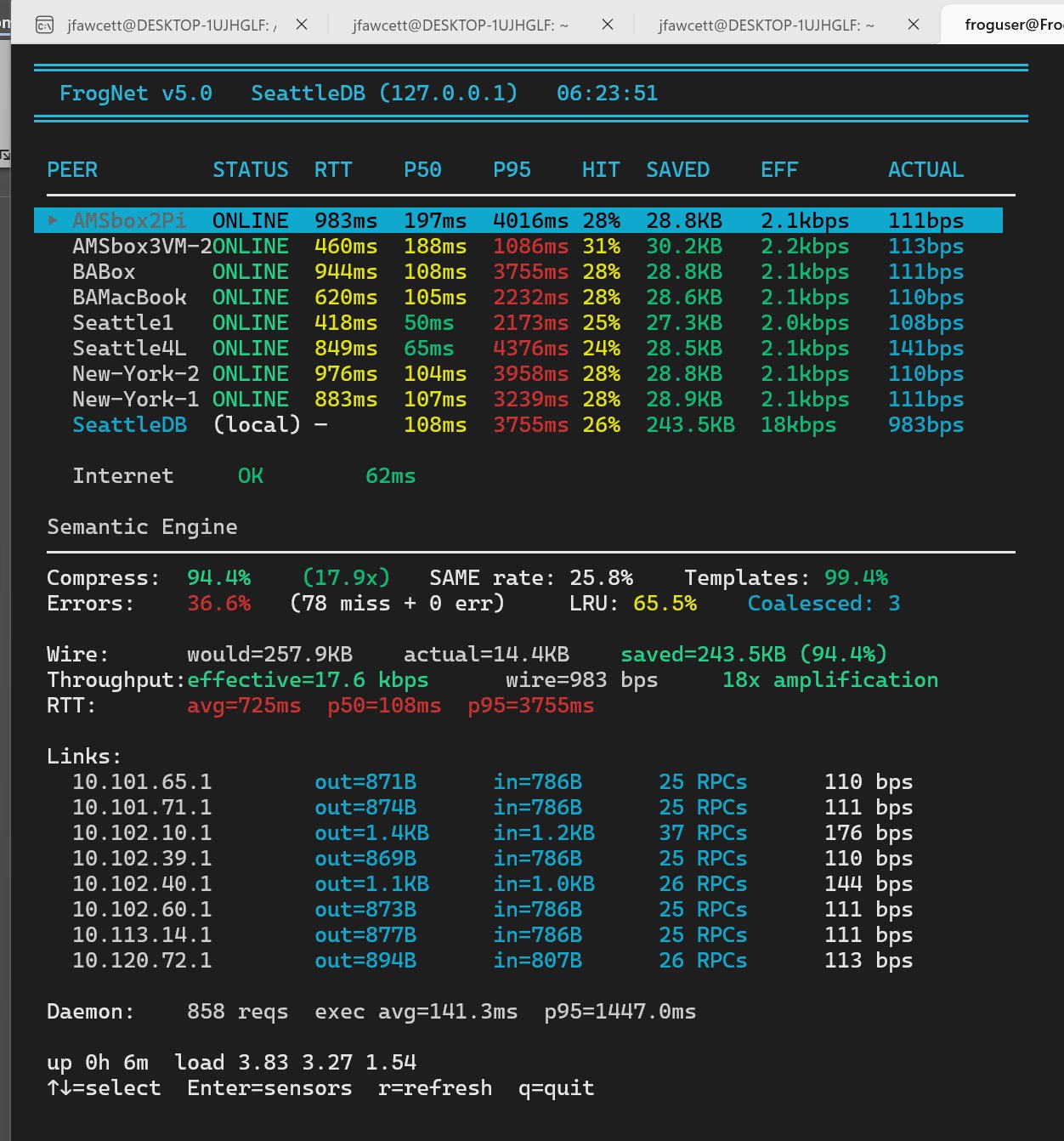

Ten nodes across three cities. In Seattle: Seattle1 (the tunnel endpoint for the cluster), SeattleDB, Seattle3, and Seattle4L. In New York: New-York-1, New-York-2, BABox, and BAMacBook. In Amsterdam: AMSbox2Pi and AMSbox3VM-2.

WireGuard tunnels carry the transatlantic legs and the coast-to-coast links. Seattle3 and Seattle4L ride on top — they reach Seattle1 over WiFi, and Seattle1 routes the rest of the world to them. Same mesh, one more transport layer, zero application changes. Hardware is a mix of Raspberry Pis, small x86 boxes, and a MacBook.

Live capabilities: Physical sensor telemetry via an ESP32/Meshtastic RF bridge. Bidirectional actuator control with closed-loop confirmation. Live node topology and tunnel status dashboard. Semantic compression metrics in real time. A domain expert with no prior FrogNet experience integrated real hardware in days.